In this article, you’ll learn how to get metadata from your pipeline run inside your pipeline’s notebooks (or other activities). The documentation on this subject is relatively limited, so I hope this walkthrough saves you some time.

In Airflow, you have macros to refer to the metadata of a pipeline run. In Kubeflow, you have variables on various levels. In Azure Synapse (and in Data Factory), you have the windowStartTime and windowEndTime system variables of the Tumbling Window trigger. To get them from the pipeline into a Synapse notebook, take the following steps.

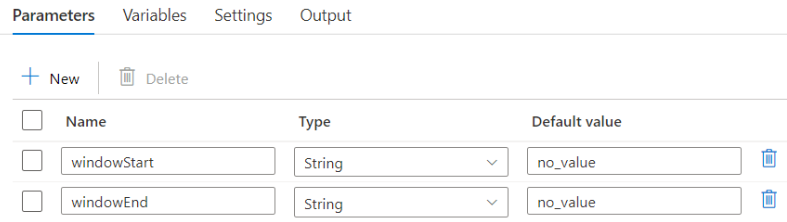

Set pipeline default parameters

First, specify the default values for both parameters at the pipeline level. You can choose the parameter names yourself, but keep them short and consistent, because you will refer to them again in the trigger and in the notebook activity.

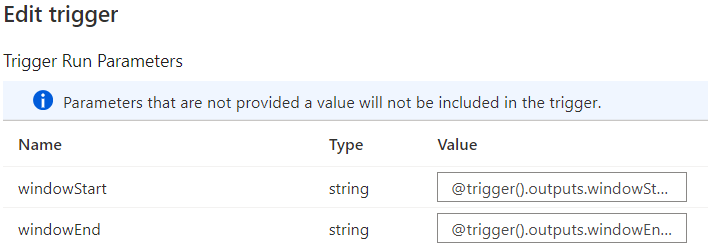

Set trigger run parameters

Next, create the trigger that will start the pipeline.

- Click Trigger > New/Edit

- Click the dropdown > Click ‘+ New’

- Select Tumbling Window as the trigger type and set an appropriate recurrence

- After you submit, a window for the trigger run parameters appears. Set the value of each pipeline parameter to the matching trigger system variable:

`@trigger().outputs.windowStartTime`

`@trigger().outputs.windowEndTime`

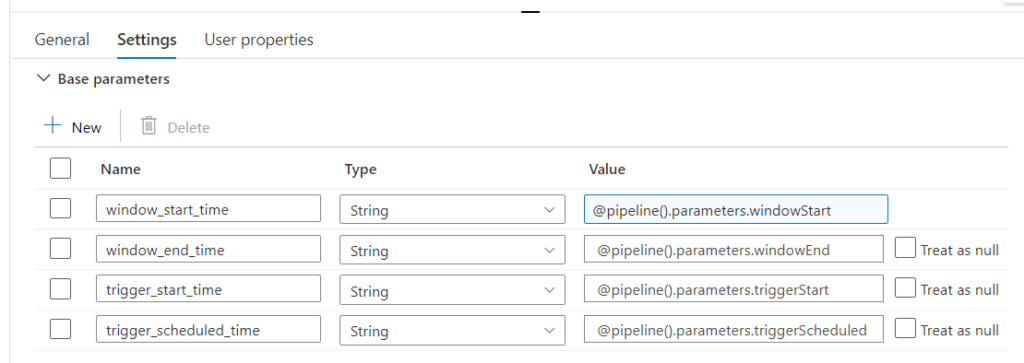
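Conceptually, this step is just a mapping from pipeline parameter names to trigger expressions. The sketch below writes that mapping down in Python for illustration only. The parameter names `window_start_time` and `window_end_time` are assumptions; use whatever names you chose in the previous step.

```python
# Hypothetical pipeline parameter names (left) mapped to the
# Tumbling Window trigger expressions from this article (right).
trigger_run_parameters = {
    "window_start_time": "@trigger().outputs.windowStartTime",
    "window_end_time": "@trigger().outputs.windowEndTime",
}

# Print the mapping as a checklist for filling in the trigger window.
for name, expression in trigger_run_parameters.items():
    print(f"{name} <- {expression}")
```

Keeping the names identical on every level (pipeline, trigger, activity, notebook) makes this mapping easy to verify at a glance.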

Set activity parameters

Finally, at the level of the (notebook) activity, you can set the base parameters. On the left-hand side, you set the names of your Python variables. On the right-hand side, you fill them with the pipeline-level parameters, using an expression of the form `@pipeline().parameters.<parameter name>`.

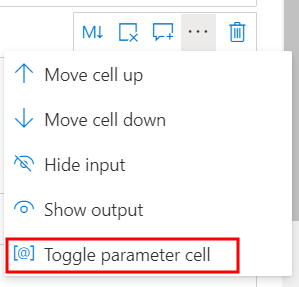

Use the parameters inside a notebook

Inside the notebook, create a cell that declares the variables in which you want to store the window start and window end time. Their names must match the base parameter names you set on the activity. Also give each one a default value for when the parameters aren’t passed in, for example when you run the notebook by hand (I set them to None).

    window_start_time = None
    window_end_time = None

Finally, mark the cell as a parameter cell. You can do this by clicking the three dots on the left-hand side of the cell and selecting “Toggle parameter cell”.
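Because the defaults are None, a notebook that runs without the pipeline parameters will only fail later, at the first place the values are used. A minimal sketch of a guard you could put in the cell after the parameter cell is shown below. The helper name `require_window` is hypothetical, and the example values are made up for the demonstration.

```python
def require_window(start, end):
    """Return (start, end), or raise if either value was not supplied."""
    candidates = (("window_start_time", start), ("window_end_time", end))
    missing = [name for name, value in candidates if value is None]
    if missing:
        raise ValueError("Missing pipeline parameters: " + ", ".join(missing))
    return start, end


# Made-up example values standing in for what the pipeline would pass.
start, end = require_window("2023-05-01T00:00:00Z", "2023-05-02T00:00:00Z")
print(start, end)

# With the None defaults the guard raises a clear error instead.
try:
    require_window(None, None)
except ValueError as error:
    print(error)
```

In the notebook itself you would call `require_window(window_start_time, window_end_time)` directly. If you prefer the notebook to stay runnable by hand, replace the error with a fallback window of your choice.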

⚠️ If you only need the date from your newly created variables, take the first 10 characters of each string. This only works when the parameters were actually passed in: slicing the None default raises a TypeError.

    window_start_time = window_start_time[0:10]
    window_end_time = window_end_time[0:10]
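If you would rather work with a real date object than a 10-character string, the slice can be handed to the standard library. The sketch below assumes the trigger passes an ISO 8601 style timestamp whose first 10 characters are `YYYY-MM-DD`; check the actual value in your own run before relying on it. The helper name `window_date` and the example timestamp are hypothetical.

```python
from datetime import date


def window_date(timestamp):
    """Return the calendar date of a timestamp string, or None if unset."""
    if timestamp is None:
        # Parameter was not passed in; keep the None default.
        return None
    # Assumption: the first 10 characters are the date as YYYY-MM-DD.
    return date.fromisoformat(timestamp[:10])


# Made-up example value, not taken from a real trigger run.
example = "2023-05-01T00:00:00Z"
print(window_date(example))  # prints 2023-05-01
print(window_date(None))     # prints None
```

A date object lets you do arithmetic and formatting later on, and the None check keeps the cell from crashing when the notebook runs outside the pipeline.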

Great success!